The Silent Meeting challenge at NorthSec 2018 was worth 20 points spread over only four flags. For this CTF, 20 points is a lot, and there’s a reason: this challenge went outside the box and literally asked you to recover what music was being played from a soundless video of a loudspeaker, and what people were saying by looking at the vibrations of a BAG OF CHIPS! Like wuuut?!

At NorthSec, I’ve been the captain of the X-Men team for several years. The team is primarily composed of Concordia University (Montreal, Canada) students, ex-students, and acquaintances. For this challenge, I worked with my girlfriend, who persisted in trying various tools.

This challenge was inspired by research done at MIT in 2014 by Davis et al., which shows that it’s possible to interpret the tiny oscillations of objects filmed at a high frame rate and turn them back into sound waves. This enables someone who can only observe objects near people who are speaking to recover their dialogue. Indeed, when someone speaks, the waves they create in the air put pressure on the objects they encounter (like your eardrums, or plant leaves), making them oscillate slightly in step with the waves.

Check this video for a quick explanation and demo:

Context

The challenge comes with the following introduction:

You joined the activist group NOYB (None Of Your Business) who are fighting against the increased national surveillance and tracking of citizens from the British government.

Your first task is to spy on the Ministry of Housing, Communities and Local Government who is concocting a plan to set up a colony on Mars on which to send the non-conforming citizens based on the merit points on their record. The plan is to listen in on the conversations held at 2 Marsham Street from a remote location in line of sight. In the past, the NOYB tried techniques such as the parabolic microphone and a laser microphone but these failed due to the Ministry playing music at their exterior glass to counteract this type of spying. But now, NOYB received from their counterpart on Artemis an ultra performant telephoto lens with glass made of ZAFO, a crystalline quartz-like structure that only forms at 0.216 of Earth gravity.

Before getting all of the details of the operation, you need to prove your wit to the group on a video with no sound, taken in a controlled environment, with the same camera that will be used for the actual mission. This camera records video at a mind boggling 2400 fps. The files that you are provided with have been slowed down 100 times.

NorthSec 2018 – Silent Meeting challenge intro

Question 1

The first video (〰.mp4) shows a loudspeaker in slow motion:

We solved this question in the dumbest way possible: we visually counted the beats of the loudspeaker while playing the video at 50% speed, to have enough time to keep track of them, then divided the count by the duration of the video. The beats were regular, confirming there was only one main frequency.

We counted about 113 beats over the 25.708 seconds of the video. As the video was slowed down 100x according to the instructions, the real duration was 0.25708 s. That leaves us with a tone frequency of 113/0.25708, which is about 439.55 Hz. Let’s say it’s close enough to 440 Hz, which is the standard A4.
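
The arithmetic above can be double-checked with a few lines of Python. This is only a sketch of our back-of-the-envelope calculation: the function names are ours, and the offset in cents from A4 is an extra sanity check that was not part of the challenge.

```python
import math

def tone_frequency(beats, video_seconds, slowdown):
    """Real-world frequency of a tone counted in a slowed-down video."""
    real_seconds = video_seconds / slowdown  # undo the slow motion
    return beats / real_seconds

def cents_from(freq_hz, reference_hz=440.0):
    """Distance from a reference pitch, in cents (100 cents = 1 semitone)."""
    return 1200 * math.log2(freq_hz / reference_hz)

freq = tone_frequency(beats=113, video_seconds=25.708, slowdown=100)
print(f"{freq:.2f} Hz")                      # 439.55 Hz
print(f"{cents_from(freq):+.1f} cents from A4")
```

Being off by a single beat in the count moves the result by about 4 Hz, so landing within a couple of cents of A4 was a reassuring sign that the count was right.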

The flag FLAG-freq1_440 gave us 4 points! That one was a given…

Question 2

A theoretical question that will bring you back to your signal processing courses, if you ever took any.

Shannon’s sampling theorem states that when you want to sample a signal, i.e., convert an analog continuous signal to a discrete digital signal, your sampling frequency needs to be at least twice the highest frequency present in the continuous signal. If you pick a lower sampling frequency, your discrete signal will not fully characterize the continuous signal (you lose information).

In other words, if you record the state of a wave twice per second (that is, at a frequency of 2Hz), you will not be able to accurately record any frequency higher than 1Hz. This has to do with the shape of a sinusoidal wave, but can be proven mathematically as well (hence it’s a theorem).

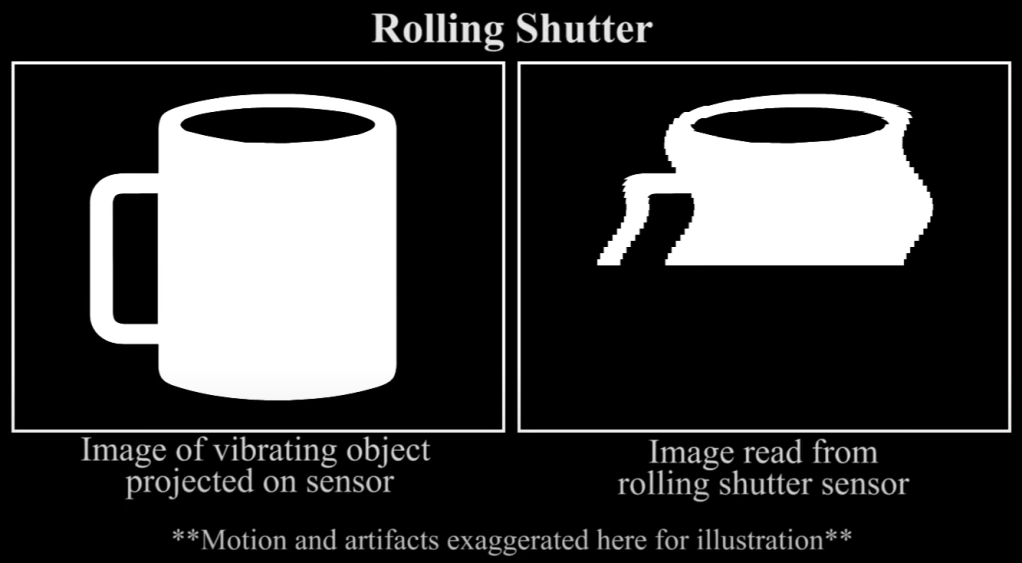

The rolling shutter effect is mentioned in MIT’s video: when an object moves as you record it with your camera, a single video frame captures the object at a slightly different moment in each row (because the camera sensor reads the rows one after the other). Each frame therefore contains several samples in time, which could increase the maximum frequency you can recover from a video.

Therefore, in our case, without the rolling shutter effect, we are bound by Shannon’s sampling theorem. At 2400 fps, we can recover all sounds that make objects move at less than 2400/2 = 1200 Hz.
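
To see what happens above that limit, here is a small Python sketch of the usual aliasing (frequency folding) rule. It was not part of the challenge; the function names and the test tones are ours, picked only for illustration.

```python
import math

FPS = 2400  # capture rate stated in the challenge

def nyquist_limit(fps):
    """Highest frequency that can be represented when sampling at fps."""
    return fps / 2

def apparent_frequency(true_hz, fps):
    """Frequency a pure tone appears to have once sampled at fps."""
    folded = true_hz % fps
    return min(folded, fps - folded)

def sample_cosine(hz, fps, count):
    """Take count samples of a cosine of frequency hz at fps samples/second."""
    return [math.cos(2 * math.pi * hz * k / fps) for k in range(count)]

print(nyquist_limit(FPS))  # 1200.0
for tone in (440, 1200, 1500, 2000):
    print(tone, "Hz is seen as", apparent_frequency(tone, FPS), "Hz")

# A 1500 Hz tone and a 900 Hz tone give the same samples at 2400 fps.
high = sample_cosine(1500, FPS, 24)
low = sample_cosine(900, FPS, 24)
print(all(abs(a - b) < 1e-9 for a, b in zip(high, low)))  # True
```

In other words, a tone above 1200 Hz does not vanish from the video: it shows up disguised as a lower one, and nothing in the samples tells the two apart.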

The flag FLAG-freq2_1200 was worth 1 point.

Question 3

For this question, the same loudspeaker was (presumably) playing a song, not a single tone. Manually counting the beats was going to be difficult…

So we searched for a tool that could convert a video into sound. That’s a weird thing to look for… Obviously, no quick Google search gave us what we really wanted. We don’t want to extract the audio track of a video file, or convert its format. We want to “interpret” the audio that’s “visible” in the video.
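
To make that goal concrete, here is a toy Python sketch of the idea at its very simplest: reduce every frame to a single number that follows the motion, then treat those numbers as audio samples at the real frame rate. Everything in it is an assumption made for illustration: the frames are synthetic, the 440 Hz tone is made up, and using the mean brightness as the motion signal is a crude stand-in, not the method of the MIT paper.

```python
import io
import math
import struct
import wave

FPS = 2400     # real capture rate stated in the challenge
TONE_HZ = 440  # hypothetical sound shaking the filmed object

def synthetic_frame(t, width=32):
    """One row of pixels showing a soft edge that shifts with the sound."""
    edge = width / 2 + 0.3 * math.sin(2 * math.pi * TONE_HZ * t)  # sub-pixel motion
    return [1 / (1 + math.exp(-(x - edge))) for x in range(width)]

def frame_to_sample(frame):
    """Collapse a frame to one number; mean brightness follows the edge position."""
    return sum(frame) / len(frame)

def frames_to_audio(frames):
    """Remove the constant offset and scale the signal to [-1, 1]."""
    raw = [frame_to_sample(f) for f in frames]
    mean = sum(raw) / len(raw)
    centered = [v - mean for v in raw]
    peak = max(abs(v) for v in centered) or 1.0
    return [v / peak for v in centered]

def to_wav_bytes(samples, rate):
    """Encode samples as a 16-bit mono PCM WAV file held in memory."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(rate)
        w.writeframes(b"".join(struct.pack("<h", int(s * 32767)) for s in samples))
    return buf.getvalue()

frames = [synthetic_frame(n / FPS) for n in range(FPS)]  # one second of "video"
audio = frames_to_audio(frames)
wav = to_wav_bytes(audio, FPS)

# Count upward zero crossings to estimate the recovered pitch.
crossings = sum(1 for a, b in zip(audio, audio[1:]) if a < 0 <= b)
print(crossings, "cycles in one second")
```

A real video is far noisier and the motion is much smaller than in this toy, which is why a dedicated tool is needed.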

We first started in the wrong direction. Given that the vibrations were sometimes too small for the naked eye, especially in the next video, we somehow landed on this MIT project that aims at “magnifying” details in videos: https://people.csail.mit.edu/mrub/vidmag/. We tried to run their code but didn’t understand what we were looking at…

Back on the right track, let’s look at what the MIT researchers left behind. On the project’s website, we can find the research paper, along with the Matlab code to do the video -> audio job.

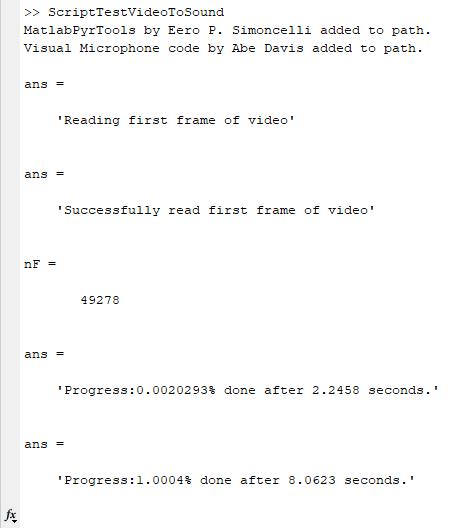

As it happened, we had access to a university computer with Matlab installed, so we tried the code. We simply needed to adapt the vidName and vidExtension variables to point to 🎶.mp4 (it is recommended to rename this file first…), then let the program run:

When prompted to select a folder, pick where you want the recovered .wav to be saved.

The program takes a while to run, and the suspense became unbearable, especially as we were running the code at 5am on the last day of the CTF, after trying code from other authors that totally didn’t work…

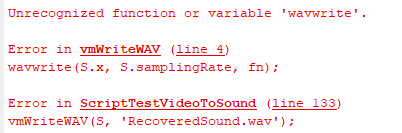

Six long minutes of intense CPU activity later, boom. Matlab error. Also, we got a spectrogram of the recovered sound, and it looks like we’ve got something!

Don’t panic, the Matlab error is due to a function name change: the code calls wavwrite, where newer Matlab versions expect audiowrite.

In vmWriteWAV.m, replace wavwrite(S.x, S.samplingRate, fn); with audiowrite(fn, S.x, S.samplingRate); (be careful, the argument order changed). You don’t actually need to rerun the whole program: simply run audiowrite("recoveredSound.wav", S.x, S.samplingRate); since S is already in your environment. Here is the recovered sound:

What a joy when we could hear the recovered melody! I immediately recognized Vangelis – Conquest of Paradise, featured in the 1992 film 1492: Conquest of Paradise.

We prepared the flag FLAG-song_conquestofparadise, submitted it in the morning at the CTF and got 6 points!

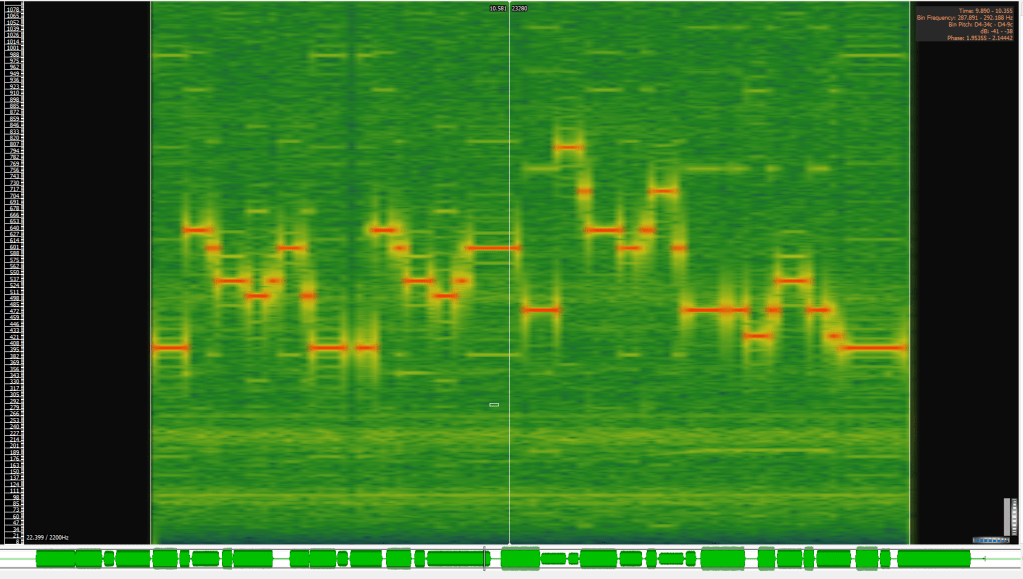

Question 4

Last but not least, we are now tackling the real deal in audio recovery:

The video indeed features a bag of chips:

Since we got the program running for the previous question, why not simply throw the new video at it?

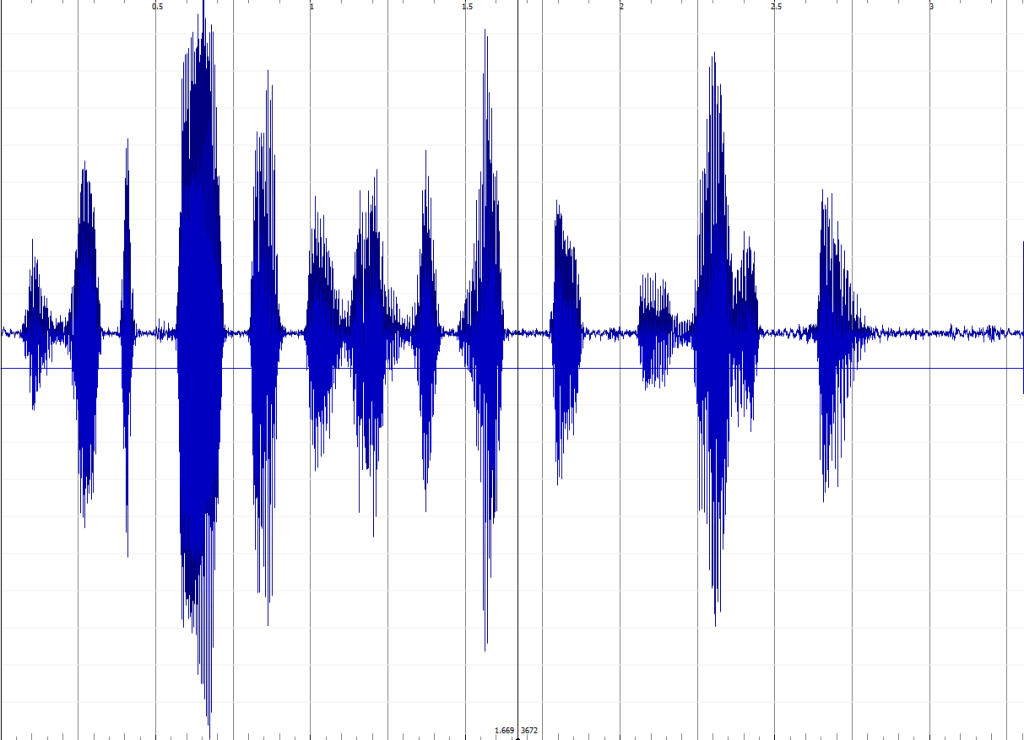

1m30 of CPU time, and an audio file:

The most difficult part of this question was understanding what the heck was being said…

We listened to the sample several times. An audiophile team member tweaked it in various ways to finally understand that the sentence was:

WE WILL DEPORT EVERYONE WITH LESS THAN TWO MERIT POINTS

This was actually related to the challenge’s context, talking about merit points:

Your first task is to spy on the Ministry of Housing, Communities and Local Government who is concocting a plan to set up a colony on Mars on which to send the non-conforming citizens based on the merit points on their record.

FLAG-deport_everyonewithlessthantwomeritpoints gave us 9 points!

4+1+6+9=20 points, and voilà! With the right tool, it wasn’t that hard.
